Healthy Internet Project

Dec 2019 - Nov 2020

Role

Lead Designer

User Researcher

Occasional Git Committer

Tools

Figma

HTML/CSS/JS

Netlify

Team

Yael Eiger

Justin Kosslyn

Renae Reints

Connie Moon Sehat

Alan Bellows

Fun Fact

...

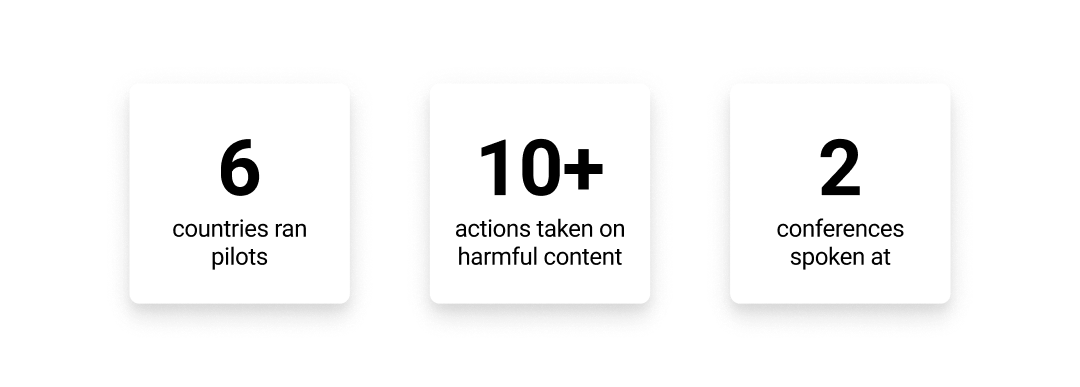

A tool to facilitate crowdsourced "flagging" of digital mis/disinformation with pilots in 6+ countries and 5 languages.

The Healthy Internet Project is a product-focused team housed within TED Conferences, the larger non-profit.

The Challenge

Not many people know that TED has built and supported communities of thousands of volunteers around the world to help with its mission of spreading ideas. From TED Translators to TED Fellows, this scale of human energy and motivation allowed us to try a relatively unexplored approach to internet health issues: crowdsourcing. Over the past year, we built a browser extension that lets people flag harmful content they come across and surface the helpful, hidden gems on the web.

Discovery: Immersing in a new space

Being new to the journalism and news legitimacy space, I wanted to dive into the nuances of the conversations already happening. Luckily, we were based in NY and were part of many of these communities.

Preliminary User Research

Conversations with 8 folks from the TED community about their digital news experiences, frustrations, and worries.

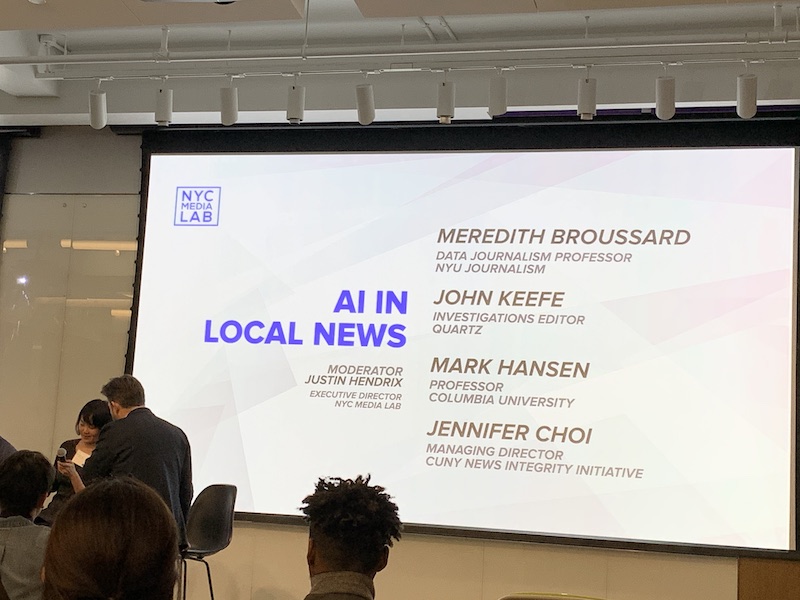

Conferences and Workshops

I was humbled to sit back and learn in some of NY's best forums for discussing misinformation: Disinfo2020, NewsQ, News '20, CredWeb, and a First Draft Coalition Simulation.

TED Lunch & Learns

My manager, Justin, hosted biweekly lunch-and-learns so the rest of the TED office could learn about this space.

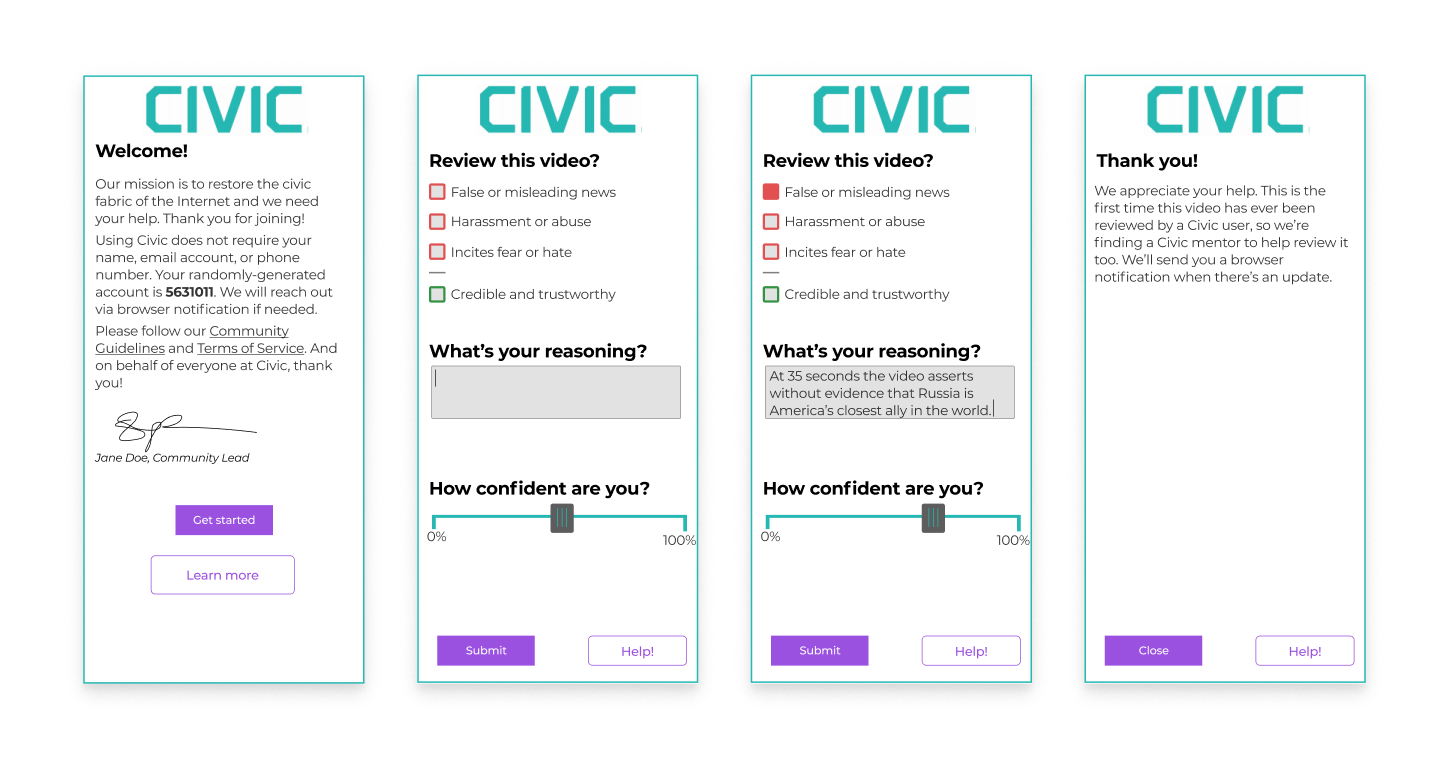

Testing a hypothesis

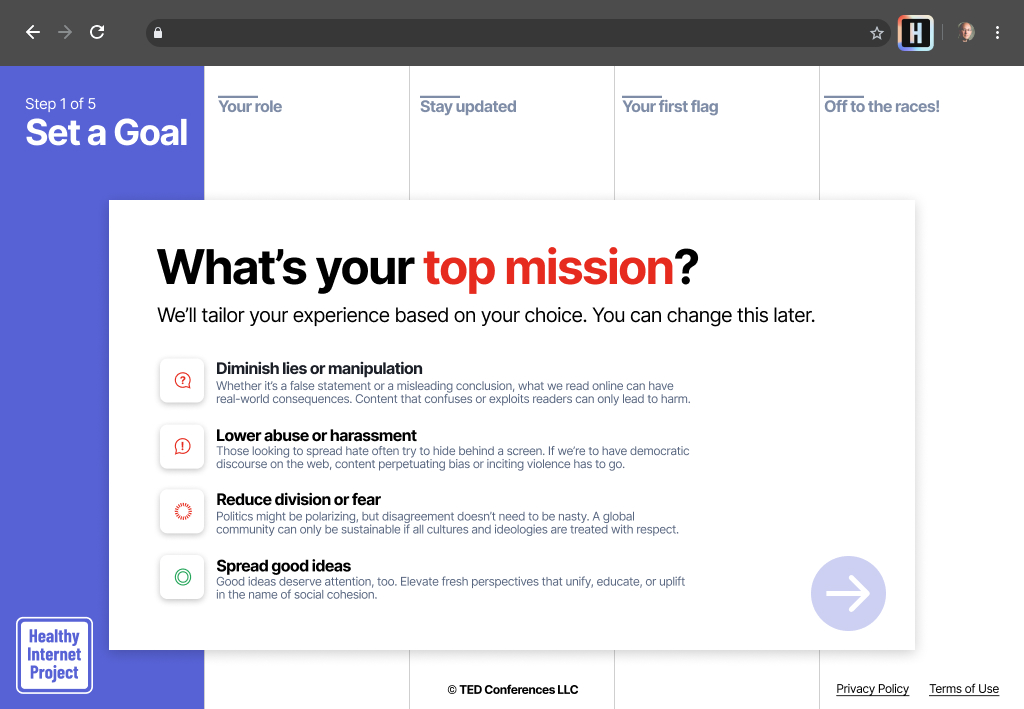

We started with these simple mockups, which I inherited as a skeleton. We used this onboarding flow as a frame to gather people's reactions to the crowdsourcing concept.

Insight: Privacy is key

Early on, we talked to folks who worried that flagging content could come back to hurt them. What if a non-democratic government got hold of a list of every user in its country who was monitoring misinformation in the region? This concern shaped a core product feature with many downstream effects: full anonymity. We don’t collect email addresses, names, or phone numbers; instead, we rely on a randomly generated User ID. This has made it harder to engage with and learn from our community. For example, we can’t send an email newsletter update when a flag has successfully led to a content takedown, so we built a notification system inside the browser instead. Similarly, creating spaces such as Slack groups where the community can talk to each other goes against our ethos.

Paper prototyping

Another large assumption was the mechanism by which we ask people to rate or "label" content. We started with sliders, similar to a 5-star rating input, but I wondered how people would naturally mark content if given more diverse options. I printed some basic "UI elements" for labeling, such as Likert-scale checkboxes, free-text fields, pre-written tags, and even emojis. There was also a blank card that participants could fill in with their own label.

Takeaways

Emojis don't always translate across cultures! We cut this input method because some emojis are read sarcastically in certain cultures, conveying the opposite of the intended meaning.

Participants showed no strong desire to create their own "tags." Pre-written tags describing content, like "conspiracy theory" or "misleading," seemed to be enough.

Usability Testing

Insight: Mission Matters

We learned early on that people interested in making the internet a healthier place have likely been burned by it in the past. I talked to a professor in a Middle Eastern country who worried about posting lecture videos online because local trolls might turn the footage into spoof or parody videos. I talked to a biology PhD candidate in Europe who regularly comes across science misinformation in her field and has a strong incentive and passion to remove it. These folks had real-life experiences and motivations that we wanted to build on. So, in the first step of onboarding, we ask for the user’s “mission,” such as “diminish lies or manipulation.” We then use this information throughout the experience to remind them of their motivation and goal.

Insight: Feeling heard

Another trend across our user research was exhaustion with bots and other tech companies' flagging tools, where comments seemed to disappear into an organizational “black hole.” We combat this in two ways, creating an interface with more feedback loops and more “human” interactions. First, after a user submits a flag, we randomly show one of 10+ “thank you” messages to give the tool a sense of dynamism. Second, we send a notification whenever anyone views a piece of flagged content, from a journalist to someone on the TED team.
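Below is a minimal TypeScript sketch of the randomized thank-you behavior. It assumes a short, hypothetical message list, because the tool's real 10+ messages aren't reproduced here. Two details are illustrative assumptions rather than documented features: the rule that skips the message shown last time, and the injectable `random` parameter, which exists only to make the selection easy to test.

```typescript
// Hypothetical, abbreviated message list; the real tool's copy is not shown here.
const THANK_YOU_MESSAGES: readonly string[] = [
  "Thanks for looking out for the internet!",
  "Your flag has been received.",
  "Nice catch. Thank you!",
];

// Pick a random thank-you message, avoiding an immediate repeat of the
// previous one (an assumed refinement to keep the tool feeling dynamic).
function pickThankYou(
  previous: string | undefined,
  random: () => number = Math.random, // returns a value in [0, 1)
): string {
  const candidates = THANK_YOU_MESSAGES.filter((m) => m !== previous);
  // Fall back to the full list if filtering left nothing (e.g. one message).
  const pool = candidates.length > 0 ? candidates : THANK_YOU_MESSAGES;
  const index = Math.floor(random() * pool.length);
  return pool[index];
}

// Usage: show a message after each of three simulated flag submissions.
let lastShown: string | undefined;
for (let i = 0; i < 3; i++) {
  lastShown = pickThankYou(lastShown);
  console.log(lastShown);
}
```

Because of the repeat check, two flags in a row never show the same message, even when the list is small.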

Process takeaways

User research is a full-team activity. Every teammate joined at least one research session.

In early prototyping stages, mocks are not precious. Change them often. We live-updated mockups during user interviews.

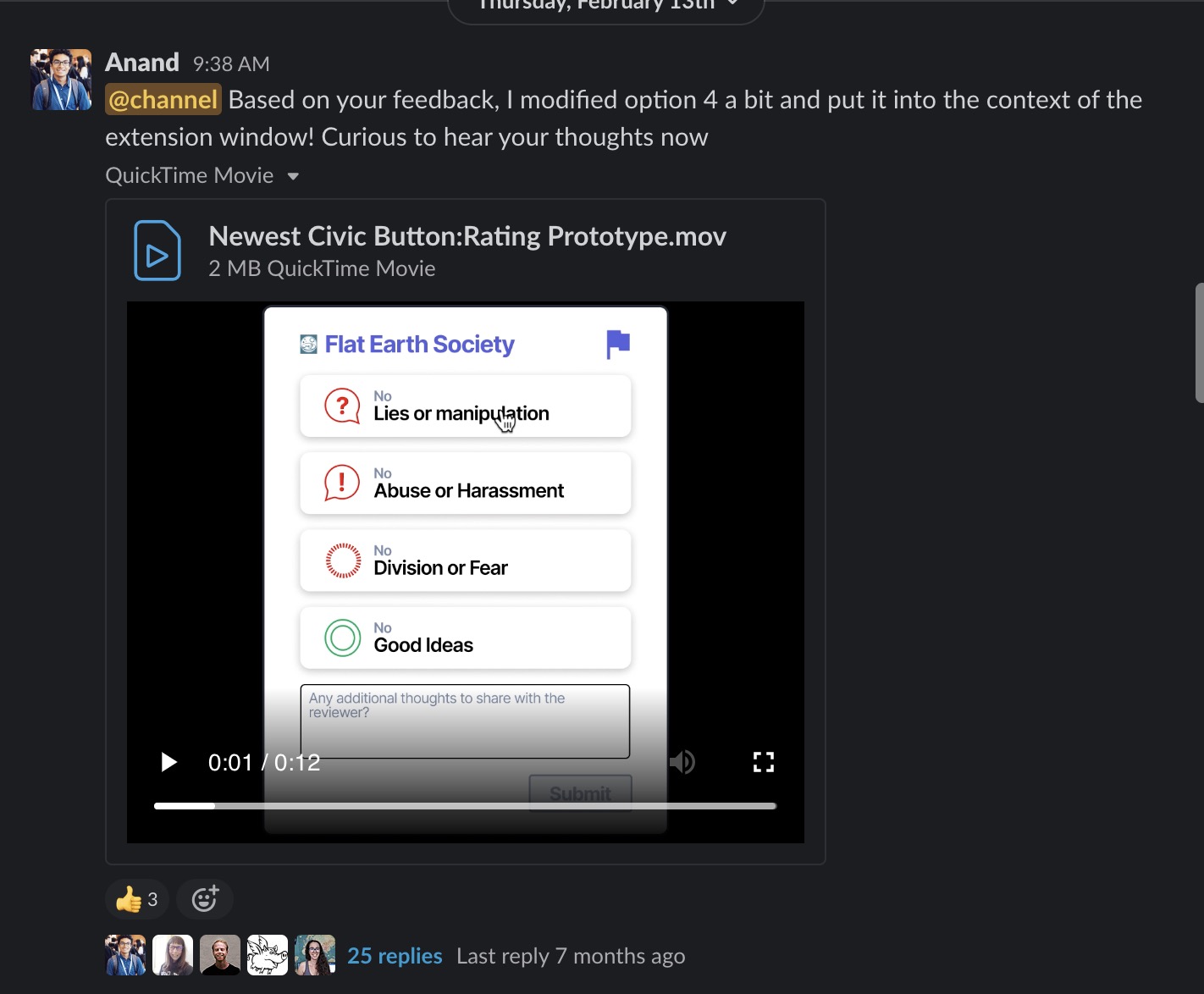

Activate your community to test new ideas. I started and ran a Slack channel with super-supporters to test new features and even brand identities.

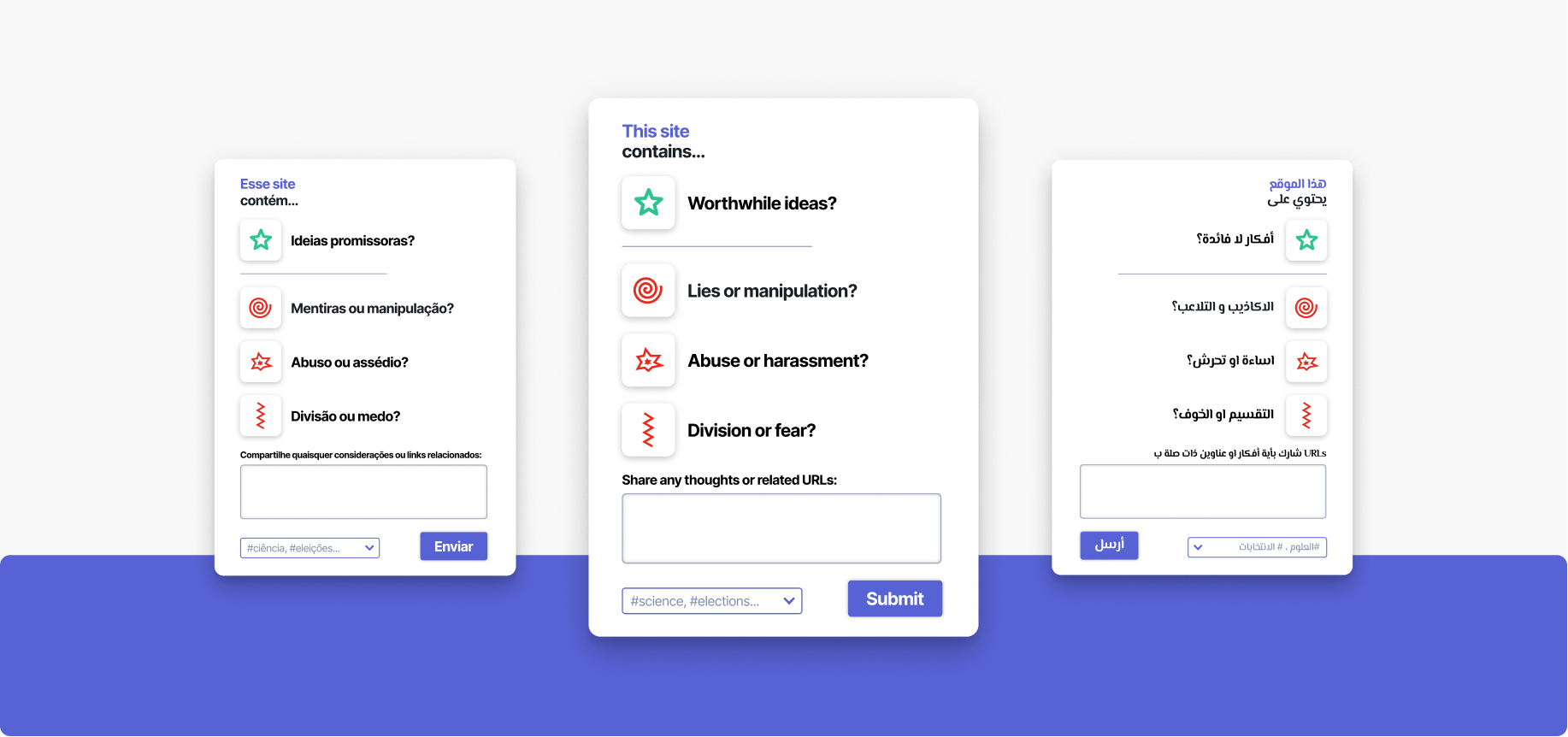

UX Explorations

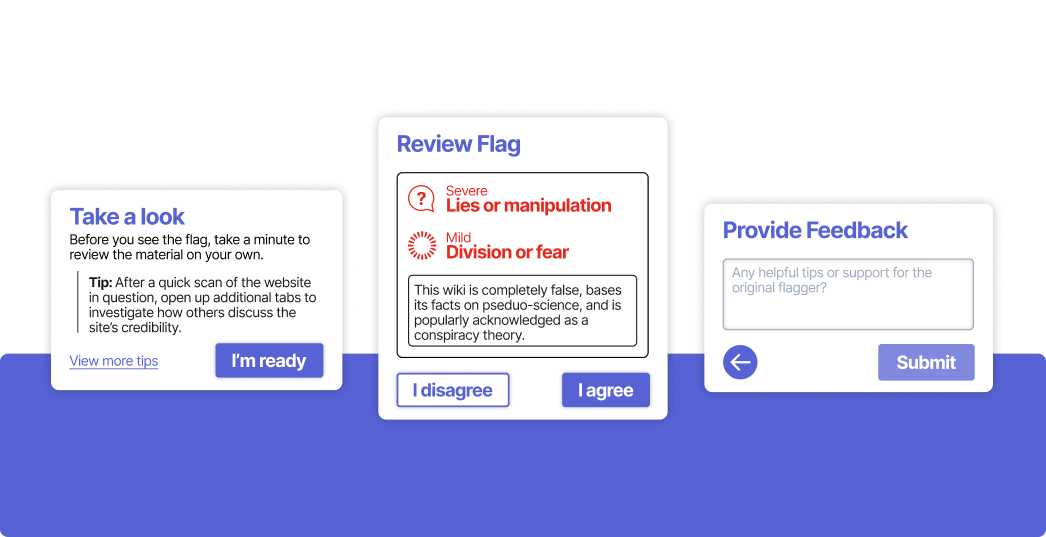

A key tension in this space is choosing between deep, high-quality responses gathered through a complex tool and a larger volume of (perhaps less rich) data from more people. So it was key to be choosy about the information we collected and to make it easy to input. We also had the constraint that our extension overlays the website's content, so it was important to minimize the screen real estate it takes up.

Finalizing the UX

Finally, we landed on the "buttwarmer" effect: an underused pattern in which clicking a button repeatedly changes its intensity or power, like a car's seat-warmer button. I then indicated each change visually with redundant encoding: words and symbols.
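Under the hood, the buttwarmer pattern is a small cyclic state machine. The following is a minimal, DOM-free TypeScript sketch that assumes four hypothetical intensity levels, since the extension's real levels, labels, and symbols aren't documented here. Each click advances the button one level and wraps back to "off" after the highest level. Every level renders with both a word and a symbol, so either encoding alone conveys the state.

```typescript
// Hypothetical levels; the real extension's wording and symbols may differ.
interface Level {
  label: string;  // word encoding
  symbol: string; // symbol encoding, redundant with the label
}

const LEVELS: readonly Level[] = [
  { label: "Off", symbol: "-" },
  { label: "Low", symbol: "!" },
  { label: "Medium", symbol: "!!" },
  { label: "High", symbol: "!!!" },
];

// One click advances one level; after the highest level it wraps back to "Off".
function nextLevel(current: number, levelCount: number = LEVELS.length): number {
  return (current + 1) % levelCount;
}

// Render both encodings together so the state is readable from either one.
function render(level: number): string {
  const { label, symbol } = LEVELS[level];
  return `${symbol} ${label}`;
}

// Usage: simulate five clicks starting from "Off".
let currentLevel = 0;
for (let click = 1; click <= 5; click++) {
  currentLevel = nextLevel(currentLevel);
  console.log(`click ${click}: ${render(currentLevel)}`);
}
// Prints "! Low", "!! Medium", "!!! High", "- Off", "! Low" in turn.
```

Keeping the state logic separate from rendering makes it easy to swap the symbols or wording, for example when localizing, without touching the click behavior.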

Putting it all together

The final, developed onboarding explaining the tool:

In The Real World

This project is an ongoing effort to understand how crowdsourced systems work, especially when applied to the health and care of our internet commons. Next steps include localizing the tool into right-to-left languages such as Arabic, refining our team website, and expanding partnerships to more countries.

It's alive!

This project is live! Experience the interfaces for yourself: